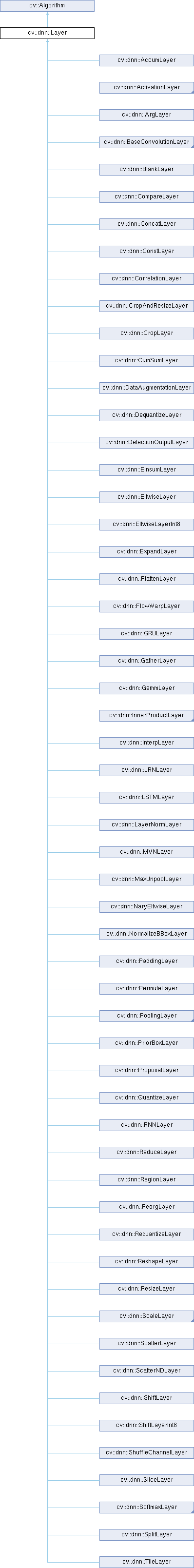

This interface class allows building new layers, which are the building blocks of networks.

#include <opencv2/dnn/dnn.hpp>

Public Member Functions | |

| Layer () | |

| Layer (const LayerParams &params) | |

| Initializes only name, type and blobs fields. | |

| virtual | ~Layer () |

| virtual void | applyHalideScheduler (Ptr< BackendNode > &node, const std::vector< Mat * > &inputs, const std::vector< Mat > &outputs, int targetId) const |

| Automatic Halide scheduling based on layer hyper-parameters. | |

| virtual void | finalize (const std::vector< Mat * > &input, std::vector< Mat > &output) |

| Computes and sets internal parameters according to inputs, outputs and blobs. | |

| std::vector< Mat > | finalize (const std::vector< Mat > &inputs) |

| This is an overloaded member function, provided for convenience. It differs from the above function only in what argument(s) it accepts. | |

| void | finalize (const std::vector< Mat > &inputs, std::vector< Mat > &outputs) |

| This is an overloaded member function, provided for convenience. It differs from the above function only in what argument(s) it accepts. | |

| virtual void | finalize (InputArrayOfArrays inputs, OutputArrayOfArrays outputs) |

| Computes and sets internal parameters according to inputs, outputs and blobs. | |

| virtual void | forward (InputArrayOfArrays inputs, OutputArrayOfArrays outputs, OutputArrayOfArrays internals) |

| Given the input blobs, computes the output blobs. | |

| virtual void | forward (std::vector< Mat * > &input, std::vector< Mat > &output, std::vector< Mat > &internals) |

| Given the input blobs, computes the output blobs. | |

| void | forward_fallback (InputArrayOfArrays inputs, OutputArrayOfArrays outputs, OutputArrayOfArrays internals) |

| Given the input blobs, computes the output blobs. | |

| virtual int64 | getFLOPS (const std::vector< MatShape > &inputs, const std::vector< MatShape > &outputs) const |

| virtual bool | getMemoryShapes (const std::vector< MatShape > &inputs, const int requiredOutputs, std::vector< MatShape > &outputs, std::vector< MatShape > &internals) const |

| virtual void | getScaleShift (Mat &scale, Mat &shift) const |

| Returns parameters of layers with channel-wise multiplication and addition. | |

| virtual void | getScaleZeropoint (float &scale, int &zeropoint) const |

| Returns scale and zeropoint of layers. | |

| virtual Ptr< BackendNode > | initCann (const std::vector< Ptr< BackendWrapper > > &inputs, const std::vector< Ptr< BackendWrapper > > &outputs, const std::vector< Ptr< BackendNode > > &nodes) |

| Returns a CANN backend node. | |

| virtual Ptr< BackendNode > | initCUDA (void *context, const std::vector< Ptr< BackendWrapper > > &inputs, const std::vector< Ptr< BackendWrapper > > &outputs) |

| Returns a CUDA backend node. | |

| virtual Ptr< BackendNode > | initHalide (const std::vector< Ptr< BackendWrapper > > &inputs) |

| Returns Halide backend node. | |

| virtual Ptr< BackendNode > | initNgraph (const std::vector< Ptr< BackendWrapper > > &inputs, const std::vector< Ptr< BackendNode > > &nodes) |

| virtual Ptr< BackendNode > | initTimVX (void *timVxInfo, const std::vector< Ptr< BackendWrapper > > &inputsWrapper, const std::vector< Ptr< BackendWrapper > > &outputsWrapper, bool isLast) |

| Returns a TimVX backend node. | |

| virtual Ptr< BackendNode > | initVkCom (const std::vector< Ptr< BackendWrapper > > &inputs, std::vector< Ptr< BackendWrapper > > &outputs) |

| virtual Ptr< BackendNode > | initWebnn (const std::vector< Ptr< BackendWrapper > > &inputs, const std::vector< Ptr< BackendNode > > &nodes) |

| virtual int | inputNameToIndex (String inputName) |

| Returns the index of the input blob in the input array. | |

| virtual int | outputNameToIndex (const String &outputName) |

| Returns index of output blob in output array. | |

| void | run (const std::vector< Mat > &inputs, std::vector< Mat > &outputs, std::vector< Mat > &internals) |

| Allocates layer and computes output. | |

| virtual bool | setActivation (const Ptr< ActivationLayer > &layer) |

| Tries to attach the subsequent activation layer to this layer, i.e. performs layer fusion in a partial case. | |

| void | setParamsFrom (const LayerParams &params) |

| Initializes only name, type and blobs fields. | |

| virtual bool | supportBackend (int backendId) |

| Asks the layer whether it supports a specific backend for computations. | |

| virtual Ptr< BackendNode > | tryAttach (const Ptr< BackendNode > &node) |

| Implements layer fusing. | |

| virtual bool | tryFuse (Ptr< Layer > &top) |

| Tries to fuse the current layer with the next one. | |

| virtual bool | tryQuantize (const std::vector< std::vector< float > > &scales, const std::vector< std::vector< int > > &zeropoints, LayerParams &params) |

| Tries to quantize the given layer and compute the quantization parameters required for fixed point implementation. | |

| virtual void | unsetAttached () |

| "Detaches" all the layers, attached to particular layer. | |

| virtual bool | updateMemoryShapes (const std::vector< MatShape > &inputs) |

Public Member Functions inherited from cv::Algorithm | |

| Algorithm () | |

| virtual | ~Algorithm () |

| virtual void | clear () |

| Clears the algorithm state. | |

| virtual bool | empty () const |

| Returns true if the Algorithm is empty (e.g. at the very beginning or after an unsuccessful read). | |

| virtual String | getDefaultName () const |

| virtual void | read (const FileNode &fn) |

| Reads algorithm parameters from a file storage. | |

| virtual void | save (const String &filename) const |

| void | write (const Ptr< FileStorage > &fs, const String &name=String()) const |

| virtual void | write (FileStorage &fs) const |

| Stores algorithm parameters in a file storage. | |

| void | write (FileStorage &fs, const String &name) const |

Public Attributes | |

| std::vector< Mat > | blobs |

| Learned parameters must be stored here so that they can be read using Net::getParam(). | |

| String | name |

| Name of the layer instance, can be used for logging or other internal purposes. | |

| int | preferableTarget |

| Preferred target for layer forwarding. | |

| String | type |

| Type name which was used for creating layer by layer factory. | |

Additional Inherited Members | |

Static Public Member Functions inherited from cv::Algorithm | |

| template<typename _Tp > | |

| static Ptr< _Tp > | load (const String &filename, const String &objname=String()) |

| Loads algorithm from the file. | |

| template<typename _Tp > | |

| static Ptr< _Tp > | loadFromString (const String &strModel, const String &objname=String()) |

| Loads algorithm from a String. | |

| template<typename _Tp > | |

| static Ptr< _Tp > | read (const FileNode &fn) |

| Reads algorithm from the file node. | |

Protected Member Functions inherited from cv::Algorithm | |

| void | writeFormat (FileStorage &fs) const |

Detailed Description

This interface class allows building new layers, which are the building blocks of networks.

Each class derived from Layer must implement the allocate() method to declare its own outputs and forward() to compute outputs. Also, before using the new layer in networks, you must register it using one of the LayerFactory macros.

Constructor & Destructor Documentation

◆ Layer() [1/2]

| cv::dnn::Layer::Layer | ( | ) |

◆ Layer() [2/2]

| explicit cv::dnn::Layer::Layer | ( | const LayerParams & | params | ) |

◆ ~Layer()

| virtual cv::dnn::Layer::~Layer | ( | ) |

Member Function Documentation

◆ applyHalideScheduler()

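| virtual void cv::dnn::Layer::applyHalideScheduler | ( | Ptr< BackendNode > & node, const std::vector< Mat * > & inputs, const std::vector< Mat > & outputs, int targetId | ) const |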

Automatic Halide scheduling based on layer hyper-parameters.

- Parameters

  - [in] node: Backend node with Halide functions.
  - [in] inputs: Blobs that will be used in forward invocations.
  - [in] outputs: Blobs that will be used in forward invocations.
  - [in] targetId: Target identifier.

- See also

- BackendNode, Target

A layer does not use its own Halide::Func members because layer fusing may have been applied. In that case the fused function should be scheduled.

◆ finalize() [1/4]

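| virtual void cv::dnn::Layer::finalize | ( | const std::vector< Mat * > & input, std::vector< Mat > & output | ) |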

Computes and sets internal parameters according to inputs, outputs and blobs.

- Parameters

  - [in] input: vector of already allocated input blobs
  - [out] output: vector of already allocated output blobs

This method is called after the network has allocated all memory for input and output blobs and before inference.

◆ finalize() [2/4]

This is an overloaded member function, provided for convenience. It differs from the above function only in what argument(s) it accepts.

◆ finalize() [3/4]

This is an overloaded member function, provided for convenience. It differs from the above function only in what argument(s) it accepts.

◆ finalize() [4/4]

| virtual void cv::dnn::Layer::finalize | ( | InputArrayOfArrays inputs, OutputArrayOfArrays outputs | ) |

Computes and sets internal parameters according to inputs, outputs and blobs.

- Parameters

  - [in] inputs: vector of already allocated input blobs
  - [out] outputs: vector of already allocated output blobs

This method is called after the network has allocated all memory for input and output blobs and before inference.

◆ forward() [1/2]

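| virtual void cv::dnn::Layer::forward | ( | InputArrayOfArrays inputs, OutputArrayOfArrays outputs, OutputArrayOfArrays internals | ) |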

Given the input blobs, computes the output blobs.

- Parameters

  - [in] inputs: the input blobs.
  - [out] outputs: allocated output blobs, which will store results of the computation.
  - [out] internals: allocated internal blobs

◆ forward() [2/2]

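| virtual void cv::dnn::Layer::forward | ( | std::vector< Mat * > & input, std::vector< Mat > & output, std::vector< Mat > & internals | ) |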

Given the input blobs, computes the output blobs.

- Parameters

  - [in] input: the input blobs.
  - [out] output: allocated output blobs, which will store results of the computation.
  - [out] internals: allocated internal blobs

◆ forward_fallback()

| void cv::dnn::Layer::forward_fallback | ( | InputArrayOfArrays | inputs, |

| OutputArrayOfArrays | outputs, | ||

| OutputArrayOfArrays | internals | ||

| ) |

Given the input blobs, computes the output blobs.

- Parameters

  - [in] inputs: the input blobs.
  - [out] outputs: allocated output blobs, which will store results of the computation.
  - [out] internals: allocated internal blobs

◆ getFLOPS()

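| inline virtual int64 cv::dnn::Layer::getFLOPS | ( | const std::vector< MatShape > & inputs, const std::vector< MatShape > & outputs | ) const |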

◆ getMemoryShapes()

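| virtual bool cv::dnn::Layer::getMemoryShapes | ( | const std::vector< MatShape > & inputs, const int requiredOutputs, std::vector< MatShape > & outputs, std::vector< MatShape > & internals | ) const |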

◆ getScaleShift()

Returns parameters of layers with channel-wise multiplication and addition.

- Parameters

  - [out] scale: Channel-wise multipliers. Total number of values should be equal to number of channels.
  - [out] shift: Channel-wise offsets. Total number of values should be equal to number of channels.

Some layers can fuse their transformations with those of subsequent layers, for example convolution + batch normalization. In that case the base layer uses the weights of the layer that follows it, and the fused layer is skipped. By default, scale and shift are empty, which means the layer has no element-wise multiplications or additions.
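The arithmetic behind this kind of fusion can be sketched without any OpenCV types. The helper below is hypothetical (the name `foldScaleShift` and the row-per-channel weight layout are assumptions made for illustration, not part of the library): it folds a channel-wise `scale[c] * x + shift[c]` step into the weights and bias of the layer that produces `x`, and treats empty vectors as "no multiplication" and "no addition".

```cpp
#include <cstddef>
#include <vector>

// Hypothetical helper, not an OpenCV function.
// Folds y = scale[c] * x + shift[c] into the preceding layer, where
// x = dot(weights[c], input) + bias[c] for each output channel c.
// Assumes bias.size() == weights.size(), and that scale and shift are
// either empty or hold one value per channel.
void foldScaleShift(std::vector<std::vector<float>>& weights,
                    std::vector<float>& bias,
                    const std::vector<float>& scale,
                    const std::vector<float>& shift)
{
    for (std::size_t c = 0; c < weights.size(); ++c) {
        // Empty vectors mean the layer has no multiplication / addition.
        const float s = scale.empty() ? 1.0f : scale[c];
        const float t = shift.empty() ? 0.0f : shift[c];
        for (float& w : weights[c])
            w *= s;                    // scale * (w . x) == (scale * w) . x
        bias[c] = s * bias[c] + t;     // scale * b + shift
    }
}
```

After folding, the base layer alone produces the same result as the original pair, which is why the fused layer can be skipped.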

◆ getScaleZeropoint()

| virtual void cv::dnn::Layer::getScaleZeropoint | ( | float & scale, int & zeropoint | ) const |

Returns scale and zeropoint of layers.

- Parameters

  - [out] scale: Output scale
  - [out] zeropoint: Output zeropoint

By default, scale is 1 and zeropoint is 0.
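As a rough illustration of what a scale and zeropoint pair means, the sketch below uses the common affine int8 mapping `q = round(x / scale) + zeropoint`. This mapping, the int8 range, and the function names `quantize` and `dequantize` are assumptions made for the example; the page itself does not specify the exact formula a given layer uses.

```cpp
#include <algorithm>
#include <cmath>
#include <cstdint>

// Hypothetical helpers, not OpenCV functions.
// Assumed affine mapping: q = round(x / scale) + zeropoint, clamped to int8.
std::int8_t quantize(float x, float scale, int zeropoint)
{
    const long q = std::lround(x / scale) + zeropoint;
    return static_cast<std::int8_t>(std::max(-128L, std::min(127L, q)));
}

// Inverse mapping: x is approximately scale * (q - zeropoint).
float dequantize(std::int8_t q, float scale, int zeropoint)
{
    return scale * static_cast<float>(static_cast<int>(q) - zeropoint);
}
```

Under this assumed mapping, the default values (scale 1, zeropoint 0) leave integer values within the int8 range unchanged.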

◆ initCann()

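| virtual Ptr< BackendNode > cv::dnn::Layer::initCann | ( | const std::vector< Ptr< BackendWrapper > > & inputs, const std::vector< Ptr< BackendWrapper > > & outputs, const std::vector< Ptr< BackendNode > > & nodes | ) |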

Returns a CANN backend node.

- Parameters

  - inputs: input tensors of CANN operator
  - outputs: output tensors of CANN operator
  - nodes: nodes of input tensors

◆ initCUDA()

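| virtual Ptr< BackendNode > cv::dnn::Layer::initCUDA | ( | void * context, const std::vector< Ptr< BackendWrapper > > & inputs, const std::vector< Ptr< BackendWrapper > > & outputs | ) |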

Returns a CUDA backend node.

- Parameters

  - context: void pointer to CSLContext object
  - inputs: layer inputs
  - outputs: layer outputs

◆ initHalide()

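| virtual Ptr< BackendNode > cv::dnn::Layer::initHalide | ( | const std::vector< Ptr< BackendWrapper > > & inputs | ) |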

Returns Halide backend node.

- Parameters

-

[in] inputs Input Halide buffers.

- See also

- BackendNode, BackendWrapper

Input buffers should be exactly the same ones that will be used in forward invocations. Although Halide::ImageParam could be used based on the input shape alone, passing the actual buffers helps prevent some memory management issues (if something goes wrong, Halide tests will fail).

◆ initNgraph()

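| virtual Ptr< BackendNode > cv::dnn::Layer::initNgraph | ( | const std::vector< Ptr< BackendWrapper > > & inputs, const std::vector< Ptr< BackendNode > > & nodes | ) |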

◆ initTimVX()

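| virtual Ptr< BackendNode > cv::dnn::Layer::initTimVX | ( | void * timVxInfo, const std::vector< Ptr< BackendWrapper > > & inputsWrapper, const std::vector< Ptr< BackendWrapper > > & outputsWrapper, bool isLast | ) |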

Returns a TimVX backend node.

- Parameters

  - timVxInfo: void pointer to CSLContext object
  - inputsWrapper: layer inputs
  - outputsWrapper: layer outputs
  - isLast: if the node is the last one of the TimVX Graph.

◆ initVkCom()

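| virtual Ptr< BackendNode > cv::dnn::Layer::initVkCom | ( | const std::vector< Ptr< BackendWrapper > > & inputs, std::vector< Ptr< BackendWrapper > > & outputs | ) |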

◆ initWebnn()

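| virtual Ptr< BackendNode > cv::dnn::Layer::initWebnn | ( | const std::vector< Ptr< BackendWrapper > > & inputs, const std::vector< Ptr< BackendNode > > & nodes | ) |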

◆ inputNameToIndex()

| virtual int cv::dnn::Layer::inputNameToIndex | ( | String | inputName | ) |

Returns the index of the input blob in the input array.

- Parameters

-

inputName label of input blob

Each layer input and output can be labeled so that it is easy to identify using the "<layer_name>[.output_name]" notation. This method maps the label of an input blob to its index in the input vector.

Reimplemented in cv::dnn::LSTMLayer.

◆ outputNameToIndex()

| virtual int cv::dnn::Layer::outputNameToIndex | ( | const String & | outputName | ) |

Returns index of output blob in output array.

- See also

- inputNameToIndex()

Reimplemented in cv::dnn::LSTMLayer.

◆ run()

| void cv::dnn::Layer::run | ( | const std::vector< Mat > & | inputs, |

| std::vector< Mat > & | outputs, | ||

| std::vector< Mat > & | internals | ||

| ) |

Allocates layer and computes output.

- Deprecated:

- This method will be removed in a future release.

◆ setActivation()

| virtual bool cv::dnn::Layer::setActivation | ( | const Ptr< ActivationLayer > & | layer | ) |

Tries to attach the subsequent activation layer to this layer, i.e. performs layer fusion in a partial case.

- Parameters

-

[in] layer The subsequent activation layer.

Returns true if the activation layer has been attached successfully.

◆ setParamsFrom()

| void cv::dnn::Layer::setParamsFrom | ( | const LayerParams & | params | ) |

◆ supportBackend()

| virtual bool cv::dnn::Layer::supportBackend | ( | int | backendId | ) |

Asks the layer whether it supports a specific backend for computations.

- Parameters

-

[in] backendId computation backend identifier.

- See also

- Backend

◆ tryAttach()

| virtual Ptr< BackendNode > cv::dnn::Layer::tryAttach | ( | const Ptr< BackendNode > & | node | ) |

Implements layer fusing.

- Parameters

-

[in] node Backend node of bottom layer.

- See also

- BackendNode

Relevant for graph-based backends. If the layer is attached successfully, returns a non-empty cv::Ptr to a node of the same backend. Fuses only over the last function.

◆ tryFuse()

Tries to fuse the current layer with the next one.

- Parameters

-

[in] top Next layer to be fused.

- Returns

- True if fusion was performed.

◆ tryQuantize()

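| virtual bool cv::dnn::Layer::tryQuantize | ( | const std::vector< std::vector< float > > & scales, const std::vector< std::vector< int > > & zeropoints, LayerParams & params | ) |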

Tries to quantize the given layer and compute the quantization parameters required for fixed point implementation.

- Parameters

  - [in] scales: input and output scales.
  - [in] zeropoints: input and output zeropoints.
  - [out] params: Quantized parameters required for fixed point implementation of that layer.

- Returns

- True if layer can be quantized.

◆ unsetAttached()

| virtual void cv::dnn::Layer::unsetAttached | ( | ) |

"Detaches" all the layers, attached to particular layer.

◆ updateMemoryShapes()

| virtual bool cv::dnn::Layer::updateMemoryShapes | ( | const std::vector< MatShape > & | inputs | ) |

Member Data Documentation

◆ blobs

| std::vector<Mat> cv::dnn::Layer::blobs |

Learned parameters must be stored here so that they can be read using Net::getParam().

◆ name

| String cv::dnn::Layer::name |

Name of the layer instance, can be used for logging or other internal purposes.

◆ preferableTarget

| int cv::dnn::Layer::preferableTarget |

Preferred target for layer forwarding.

◆ type

| String cv::dnn::Layer::type |

Type name which was used for creating layer by layer factory.

The documentation for this class was generated from the following file:

- opencv2/dnn/dnn.hpp
